Shopping malls collect more visitor data than ever before — footfall counts, dwell times, zone heatmaps, queue lengths. Yet many still operate in a legal grey area when it comes to GDPR video analytics compliance. The paradox is clear: retailers need granular data to compete, but the regulation that protects consumers can also shut down entire analytics programmes overnight. This guide breaks down exactly what the GDPR requires for video analytics in shopping malls, where the real risks lie, and how to achieve full compliance without sacrificing insight.


📊 Sources: GDPR, EDPB Guidelines, EU AI Act, Flame Analytics


1. The compliance paradox: retailers need data but fear GDPR

Shopping mall managers face a difficult tension. On one side, tenants demand footfall reports, conversion metrics, and zone-level performance data to justify rents and optimise store placement. On the other, data protection authorities across Europe are increasing scrutiny of video surveillance systems used for purposes beyond basic security.

The result? Many malls either avoid analytics altogether, losing competitive intelligence, or deploy systems without proper legal review, exposing themselves to fines of up to €20 million or 4% of global annual turnover, whichever is higher (Art. 83(5)). Neither approach is sustainable.
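To make the fine ceiling concrete, here is a minimal sketch of the "whichever is higher" rule. The function name is illustrative, and the figure it returns is only the statutory upper bound, not a prediction of what an authority would actually impose.

```python
def max_gdpr_fine_eur(global_annual_turnover_eur: int) -> float:
    """Upper bound of a top-tier GDPR fine: EUR 20 million or 4% of turnover, whichever is higher."""
    fixed_cap_eur = 20_000_000
    turnover_cap_eur = global_annual_turnover_eur * 4 / 100  # 4% of global annual turnover
    return max(fixed_cap_eur, turnover_cap_eur)


# An operator with EUR 100 million turnover is still exposed to the EUR 20 million cap.
print(max_gdpr_fine_eur(100_000_000))
# For a group with EUR 2 billion turnover, the 4% branch dominates.
print(max_gdpr_fine_eur(2_000_000_000))
```

For smaller operators the fixed €20 million figure is the relevant ceiling; the percentage only takes over above €500 million in turnover.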

The good news: GDPR compliance and powerful retail analytics are not mutually exclusive. The key lies in understanding what the regulation actually prohibits versus what it permits — and choosing technology designed with privacy at its core.

Key insight: The GDPR does not ban video analytics. It bans the processing of personal data without a lawful basis. The distinction between analytics that process personal data and those that don’t is where compliance — or non-compliance — begins.

2. GDPR fundamentals for video analytics

Before diving into compliance strategies, it is essential to understand which GDPR articles directly affect video analytics in retail environments.

| GDPR Article | What it covers | Impact on video analytics |
|---|---|---|
| Art. 6: Lawful basis | Six legal grounds for processing personal data | Legitimate interest is the most common basis for CCTV; analytics requires separate assessment |
| Art. 9: Special categories | Biometric data processed to uniquely identify a person | Triggers strict prohibition unless explicit consent or specific exemptions apply |
| Art. 13/14: Transparency | Information to be provided to data subjects | Signage, privacy notices, and layered information required at every entry point |
| Art. 25: Data protection by design | Privacy embedded into system architecture | Analytics platform must minimise data collection by default |
| Art. 35: DPIA | Data Protection Impact Assessment | Mandatory for large-scale video monitoring of publicly accessible areas |
| Art. 37: DPO | Data Protection Officer appointment | Required when core activities involve large-scale monitoring |

The European Data Protection Board (EDPB) published Guidelines 3/2019 specifically addressing video surveillance. These guidelines clarify that video footage of identifiable individuals constitutes personal data — even if you never intend to identify anyone. This is a critical point: intent does not determine compliance; capability does.

For shopping malls, the practical consequence is a Data Protection Impact Assessment (DPIA). Article 35(3)(c) GDPR requires one for systematic monitoring of a publicly accessible area on a large scale, and Guidelines 3/2019 point controllers to that requirement. It applies regardless of whether the system uses AI or simple motion detection.

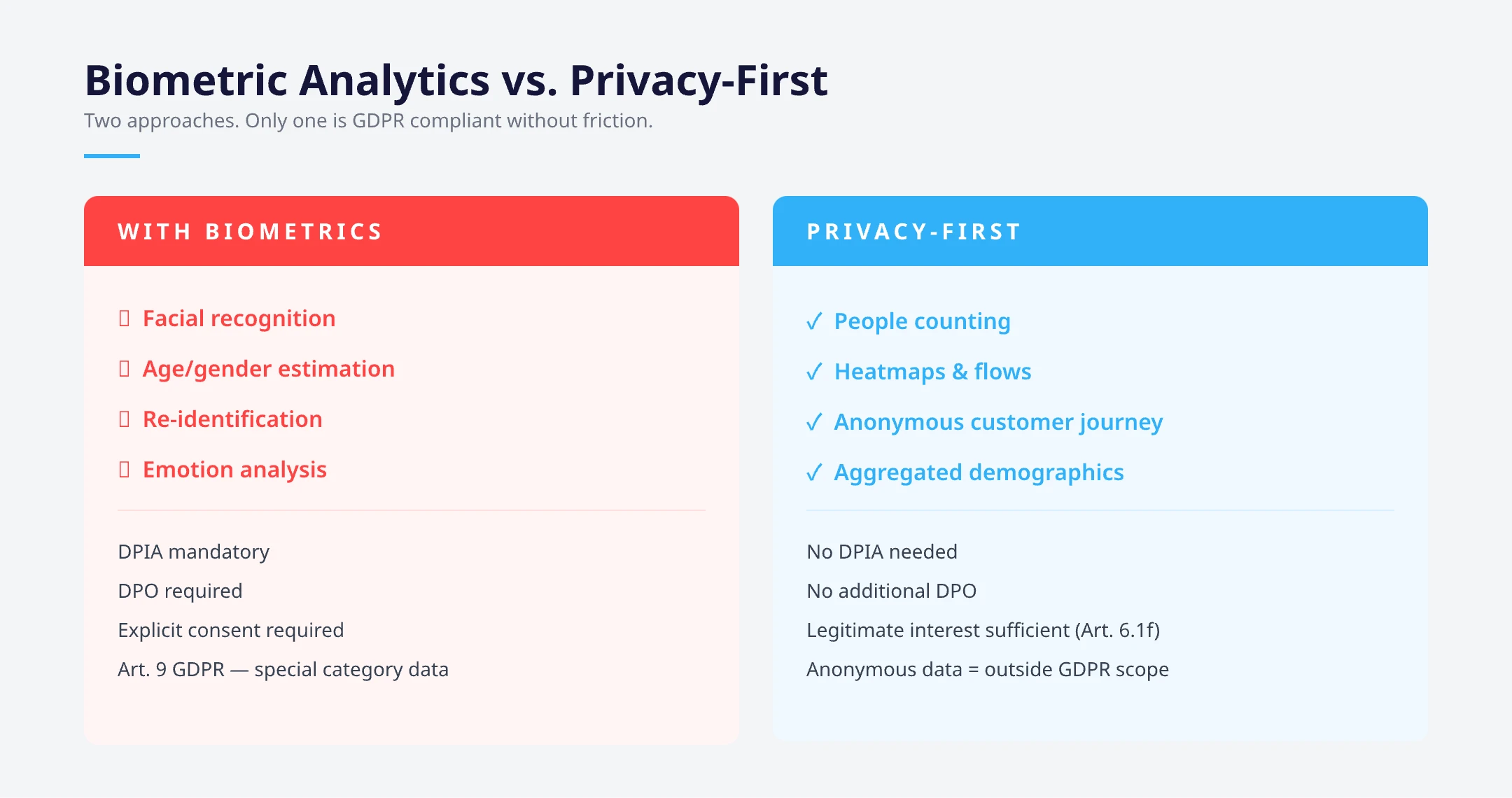

3. The biometrics line: why most retailers cross it unknowingly

Article 9 of the GDPR places biometric data in the “special categories” — the same tier as health data, political opinions, and religious beliefs. Processing biometric data to uniquely identify a natural person is prohibited by default, with only narrow exceptions (explicit consent, substantial public interest, etc.).

Here is where many retailers unknowingly cross the line. Some video analytics platforms use techniques that technically qualify as biometric processing under GDPR definitions:

- Generating a unique “signature” from a person’s appearance (clothing, body shape, gait) to track them across cameras or visits. Even without storing a face template, if the system can re-identify a specific individual, it may constitute biometric processing.
- Estimating age and gender from facial features. While not “identification” in the traditional sense, the EDPB has indicated that processing facial images to extract demographic data can fall under Article 9 if it involves biometric processing techniques.
- Analysing facial expressions to gauge shopper mood or satisfaction. The EU AI Act, whose prohibitions have applied since February 2025, bans emotion recognition in workplaces and educational institutions, with narrow medical and safety exceptions. Emotion recognition elsewhere, including retail, is treated as high-risk and carries transparency obligations.

Warning: If your video analytics vendor mentions “unique visitor counting,” “return visitor detection,” or “demographic profiling,” ask them exactly how these features work. If the answer involves generating any form of individual signature — even a temporary one — you may be processing biometric data under GDPR Article 9.

4. Three levels of GDPR compliance in video analytics

Not all video analytics carry the same regulatory risk. Understanding where your current setup falls on the compliance spectrum is the first step toward closing gaps.

| Level | Technology | Data processed | GDPR risk | Typical setup |
|---|---|---|---|---|
| Basic CCTV | Recording only | Video footage (personal data) | Medium | Standard security cameras with NVR; legitimate interest basis; signage required |
| Enhanced analytics | AI with individual tracking | Appearance signatures, demographics, re-ID | High | Dedicated sensors or software that creates individual profiles; likely triggers Art. 9 |
| Privacy-first analytics | AI without biometrics | Aggregate counts, flows, heatmaps; no individual signatures | Low | Processes video frames to extract statistics, discards imagery immediately; no Art. 9 trigger |

The critical difference between enhanced analytics (the second level) and privacy-first analytics (the third) is whether the system generates any data that can be linked back to a specific individual. Privacy-first platforms process video frames to produce aggregate, anonymous metrics and then discard the raw footage. No templates, no signatures, no profiles. This approach aligns with GDPR’s data minimisation principle (Article 5.1c) by design.
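One way to start checking which side of that line a system falls on is to inspect the records it exports. The sketch below is a rough first-pass audit, assuming the vendor delivers analytics as key-value records. The field names in `INDIVIDUAL_LEVEL_FIELDS` are hypothetical examples, not taken from any real product, and a clean result is not proof of anonymity.

```python
# Hypothetical field names that would suggest per-person data in an analytics export.
INDIVIDUAL_LEVEL_FIELDS = {
    "track_id", "person_id", "visitor_id",
    "appearance_signature", "face_embedding",
    "age_estimate", "gender_estimate",
}


def flag_individual_fields(record: dict) -> list:
    """Return the keys of a record that match the individual-level watch list."""
    return sorted(key for key in record if key.lower() in INDIVIDUAL_LEVEL_FIELDS)


# An aggregate record of the kind a privacy-first system would export.
aggregate_record = {"zone": "A", "hour": "10:00", "visitor_count": 347, "avg_dwell_min": 4.2}
# A record that carries a per-person handle and an appearance vector.
tracking_record = {"zone": "A", "track_id": "t-91", "appearance_signature": [0.1, 0.7]}

print(flag_individual_fields(aggregate_record))  # no flagged keys
print(flag_individual_fields(tracking_record))   # both per-person keys are flagged
```

A name-based check like this only catches the obvious cases. It is a prompt for questions to the vendor, not a substitute for their written technical declaration.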

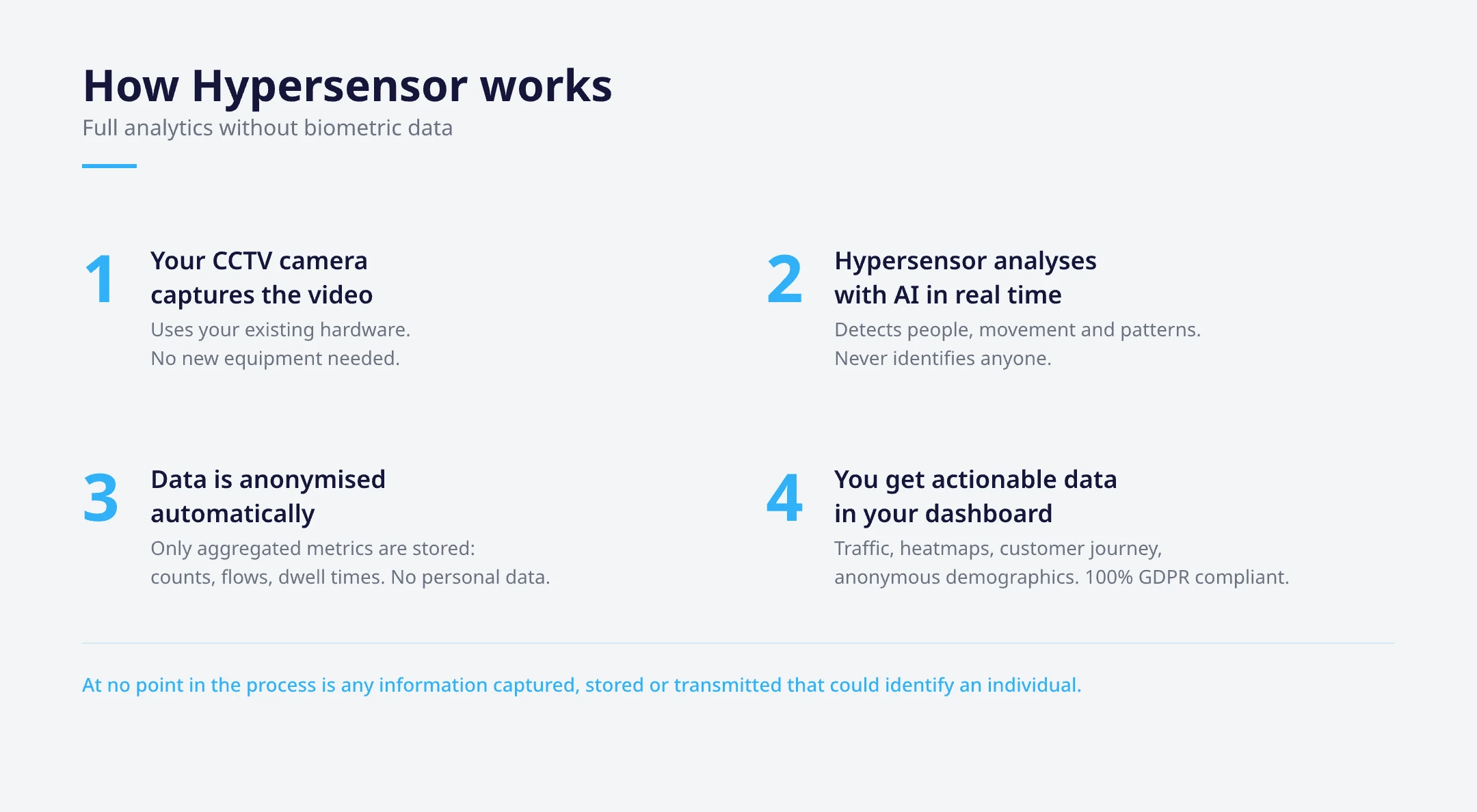

5. Flame Hypersensor: how to do video analytics without biometrics

Flame’s Hypersensor was engineered from the ground up to solve the compliance paradox. It delivers the analytics that shopping malls need — footfall, heatmaps, zone dwell time, queue monitoring, occupancy — while operating entirely below the biometrics threshold defined by the GDPR.

Here is how it works at a technical level:

1. Video frames are analysed locally (on-premise or at the edge). Raw footage never leaves the mall’s infrastructure and is not stored by the analytics platform.
2. The AI model detects the presence of people as objects in the frame. It counts them, tracks movement direction and speed, and measures time spent in defined zones. It does not generate any biometric template, appearance signature, or individual identifier.
3. Within milliseconds, the raw frame is discarded and only aggregate numerical data is transmitted to the cloud dashboard: “Zone A had 347 visitors between 10:00 and 11:00 with an average dwell time of 4.2 minutes.” No image, no video, no personal data.
4. No new cameras or dedicated sensors required. Hypersensor connects to the mall’s existing CCTV infrastructure, reducing both cost and the physical footprint of the data processing operation.
5. There is no database of faces, no library of appearance templates, no mechanism for re-identifying returning visitors through biometric means. The system is architecturally incapable of biometric identification.
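The aggregate-only idea in the steps above can be illustrated with a short sketch. This is not Flame’s implementation: `FrameCounts`, `aggregate`, and the detector output format are assumptions made for illustration. The sketch covers only occupancy-style metrics and leaves out entry counting and dwell time.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FrameCounts:
    """Output of a hypothetical on-site detector for one frame: people per zone, nothing else."""
    hour: str              # reporting bucket, e.g. "10:00"
    people_per_zone: dict  # e.g. {"A": 3, "B": 1}


def aggregate(frames, seconds_per_frame: float) -> dict:
    """Fold per-frame counts into per-hour, per-zone totals; only these totals are kept."""
    stats = defaultdict(lambda: {"person_seconds": 0.0, "peak_occupancy": 0})
    for frame in frames:
        for zone, count in frame.people_per_zone.items():
            bucket = stats[(frame.hour, zone)]
            bucket["person_seconds"] += count * seconds_per_frame
            bucket["peak_occupancy"] = max(bucket["peak_occupancy"], count)
    return dict(stats)


frames = [
    FrameCounts("10:00", {"A": 3, "B": 1}),
    FrameCounts("10:00", {"A": 5, "B": 0}),
    FrameCounts("11:00", {"A": 2}),
]
report = aggregate(frames, seconds_per_frame=1.0)
print(report[("10:00", "A")])
```

The point of the design is what the function never receives: no pixels, no crops, no per-person handles. Everything that leaves the site is a number attached to a zone and a time bucket.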

Why this matters for compliance: Because Hypersensor never creates data that can identify or re-identify a specific person, the output falls outside the scope of GDPR Article 9 (special categories). The DPIA process is significantly simplified, and the legal basis (legitimate interest) is straightforward to justify. Over 50 shopping malls — including properties managed by Cushman & Wakefield — rely on this approach across Spain, Mexico, the UK, Czech Republic, and beyond.

6. GDPR compliance checklist: 15 items for video analytics in shopping malls

Whether you are deploying a new people counting system or auditing an existing video analytics installation, use this checklist to assess your GDPR readiness.

| # | Compliance item | Category |
|---|---|---|
| 1 | Conduct a DPIA before deploying any video analytics in public areas (Art. 35) | Legal |
| 2 | Document your lawful basis: legitimate interest requires a balancing test (Art. 6.1f) | Legal |
| 3 | Verify the system does not process biometric data: request a written technical declaration from your vendor | Technical |
| 4 | Install visible signage at all entry points with the controller’s identity, purpose, and link to full privacy notice | Transparency |
| 5 | Publish a layered privacy notice: first layer on signage, second layer online with full Art. 13 information | Transparency |
| 6 | Define and enforce retention periods: CCTV footage typically 72 hours max; analytics data can be longer if anonymised | Data management |
| 7 | Implement access controls: restrict who can view live/recorded footage vs. analytics dashboards | Security |
| 8 | Sign a Data Processing Agreement (DPA) with your analytics vendor (Art. 28) | Legal |
| 9 | Verify data transfer mechanisms: if analytics data goes outside the EU/EEA, ensure adequate safeguards (SCCs, adequacy decision) | Legal |
| 10 | Appoint a DPO if large-scale monitoring of public areas is a core activity (Art. 37) | Governance |
| 11 | Establish a process for data subject access requests: people have the right to request footage of themselves | Rights |
| 12 | Ensure data minimisation by design: only collect what is strictly necessary for the stated purpose | Technical |
| 13 | Encrypt data in transit and at rest: both video streams and analytics outputs | Security |
| 14 | Conduct annual compliance reviews: reassess the DPIA when technology or purposes change | Governance |
| 15 | Check EU AI Act obligations: if your system uses AI for real-time analysis of public spaces, classification as “high-risk” may apply | Legal |

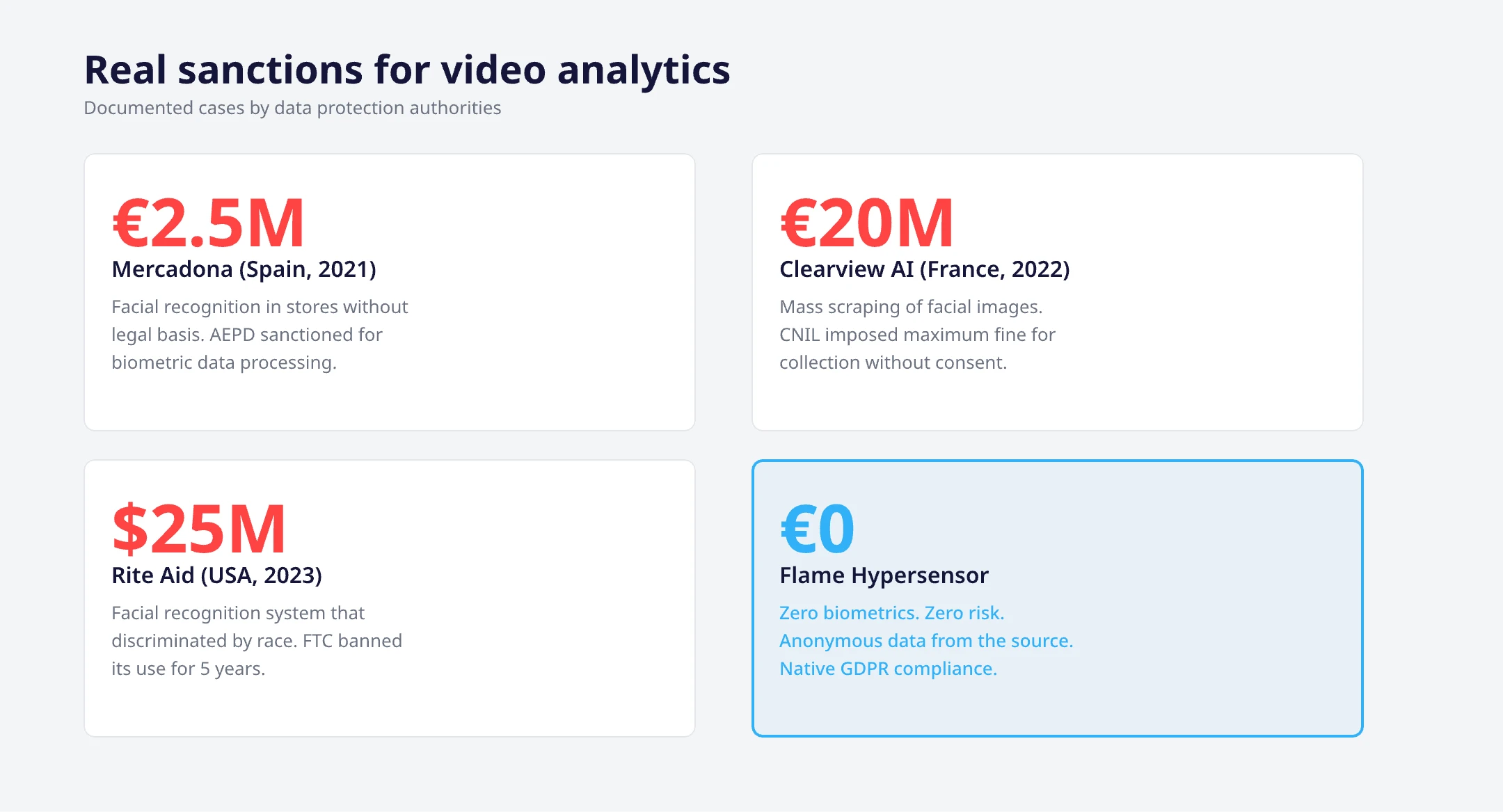

7. Common mistakes that lead to sanctions

Data protection authorities across Europe have issued significant fines related to video surveillance and analytics. Here are the most common pitfalls shopping malls should avoid:

- The Spanish DPA (AEPD) fined Mercadona €2.5 million in 2021 for deploying a facial recognition system in stores without an adequate impact assessment. The system processed biometric data without meeting Art. 9 requirements.
- Multiple authorities have sanctioned businesses for having CCTV cameras without proper notices. A simple “CCTV in operation” sign is not enough: GDPR requires the controller’s identity, purpose, and reference to the full privacy notice.
- Storing CCTV footage for 30, 60, or 90 days “just in case” risks violating the storage limitation principle. EDPB Guidelines 3/2019 indicate that footage should in most cases be erased after a few days and that retention beyond 72 hours needs stronger justification, unless a specific incident requires extended retention.
- Mall operators often enable “advanced” analytics features offered by their CCTV vendor (heat mapping, demographic analysis, dwell time) without realising these may involve biometric processing. If the vendor cannot provide a clear technical explanation of how data is anonymised, assume it is not.
- If your analytics platform processes personal data on your behalf, you need a Data Processing Agreement under Art. 28. Without it, both the controller (the mall) and the processor (the vendor) are exposed to sanctions.

Pro tip: When evaluating a video analytics vendor for your shopping mall, ask three questions: (1) Does the system generate any individual-level identifier, even temporarily? (2) Where is video data processed and is any footage transmitted off-site? (3) Can you provide a pre-completed DPIA template for your platform? A privacy-first vendor will have clear, documented answers to all three.

8. Frequently asked questions

Is people counting with video cameras GDPR-compliant?

Yes, when done correctly. People counting that produces only aggregate numbers (e.g., “142 visitors entered between 14:00 and 15:00”) without identifying or tracking individuals is compatible with GDPR. The key is that the system processes video frames to extract counts and immediately discards the imagery, never generating personal data as output. Because the cameras still capture identifiable people before the imagery is discarded, a DPIA is still required where they systematically monitor a publicly accessible area on a large scale (Art. 35).

Do I need consent to use video analytics in a shopping mall?

Not necessarily. Most video analytics in retail rely on “legitimate interest” (Art. 6.1f) rather than consent, since obtaining explicit consent from every visitor entering a mall is impractical. However, you must conduct a legitimate interest assessment (LIA), ensure proper signage, and demonstrate that the processing does not override visitors’ rights and freedoms. If your system processes biometric data (Art. 9), legitimate interest alone is insufficient — explicit consent or another Art. 9 exemption is required.

What is the difference between GDPR and the EU AI Act for video analytics?

The GDPR regulates the processing of personal data regardless of the technology used. The EU AI Act (Regulation (EU) 2024/1689) regulates AI systems by risk level. It prohibits real-time remote biometric identification in publicly accessible spaces for law enforcement purposes, subject to narrow exceptions. AI-based video analytics that do not identify anyone may still be classified as “high-risk” under Annex III, for example when they perform emotion recognition or biometric categorisation by sensitive attributes, which brings conformity assessments, transparency obligations, and human oversight. Both regulations apply simultaneously; compliance with one does not guarantee compliance with the other.

Can I use heatmaps in my shopping mall without violating GDPR?

Yes. Heatmaps that show aggregate foot traffic patterns across zones — without tracking or identifying specific individuals — are fully compatible with GDPR. The technology counts the density of people in defined areas over time and produces a visual overlay. As long as the underlying system does not generate individual trajectories linked to biometric or personal identifiers, heatmaps represent anonymised statistical output. Flame’s Hypersensor generates heatmaps using exactly this approach.
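As an illustration of what “density of people in defined areas” means in practice, the sketch below accumulates anonymous floor positions into a grid of counts. It is a simplified example under stated assumptions: positions arrive as bare `(x, y)` coordinates in metres with no identifier attached, and the function name and grid layout are hypothetical rather than a description of Hypersensor.

```python
import math


def accumulate_heatmap(positions, width_m: float, height_m: float, cell_m: float):
    """Count anonymous floor positions per grid cell; returns rows of integer counts."""
    cols = math.ceil(width_m / cell_m)
    rows = math.ceil(height_m / cell_m)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in positions:
        # Ignore detections that fall outside the mapped floor area.
        if 0 <= x < width_m and 0 <= y < height_m:
            grid[int(y // cell_m)][int(x // cell_m)] += 1
    return grid


# Five detections pooled from many frames; none carries a person or track identifier.
positions = [(0.5, 0.5), (1.5, 0.2), (1.9, 0.9), (3.2, 1.1), (9.0, 9.0)]
heatmap = accumulate_heatmap(positions, width_m=4, height_m=2, cell_m=1)
print(heatmap)  # → [[1, 2, 0, 0], [0, 0, 0, 1]]
```

Because each position is added to a cell counter and then forgotten, the grid cannot be unwound into individual paths. Coarser cells and longer accumulation windows make the output more clearly statistical.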

How long can I store video analytics data under GDPR?

It depends on the type of data. Raw CCTV footage containing identifiable individuals should typically be kept for only a few days: EDPB Guidelines 3/2019 treat retention beyond 72 hours as needing stronger justification, and national rules vary, so check your local DPA’s guidance. A specific security incident can justify longer retention of the relevant footage. Anonymised analytics data (aggregate counts, heatmaps, dwell time averages) is not personal data and therefore falls outside GDPR retention limits entirely, provided it is genuinely anonymous. You can store such data indefinitely for trend analysis and benchmarking.
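A retention period only helps if something enforces it. The following sketch shows one way to select footage that is due for deletion. The 72-hour default mirrors the figure used in this guide and should be adjusted to local guidance. The clip names, the `on_hold` set for incident footage, and the function itself are illustrative assumptions rather than features of any specific recorder.

```python
from datetime import datetime, timedelta, timezone


def clips_past_retention(recorded_at: dict, now: datetime,
                         retention: timedelta = timedelta(hours=72),
                         on_hold=frozenset()) -> list:
    """Return clip names older than the retention period, skipping clips held for an incident."""
    return sorted(
        name for name, timestamp in recorded_at.items()
        if now - timestamp > retention and name not in on_hold
    )


now = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)
recorded_at = {
    "cam1_a": now - timedelta(hours=80),   # past retention, should be deleted
    "cam1_b": now - timedelta(hours=10),   # still within retention
    "cam2_a": now - timedelta(hours=100),  # past retention but tied to an incident
}
to_delete = clips_past_retention(recorded_at, now, on_hold={"cam2_a"})
print(to_delete)  # → ['cam1_a']
```

Running a check like this on a schedule, and logging what it deletes, also gives you evidence of enforcement for the annual compliance review.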

Ready for GDPR-compliant video analytics?

Discover how Flame Hypersensor delivers footfall, heatmaps, and occupancy data for your shopping mall — with zero biometric processing. Trusted by 50+ malls worldwide.
